How Your Brain Keeps You From Running Into Walls

- Published 19 Sep 2019

- Author Nicoletta Lanese

- Source BrainFacts/SfN

Linda Henriksson spends her days spying on the human brain, watching signals zip through its wrinkles as it makes sense of the outside world. Various tools allow her to peek inside the brain without disturbing the head it’s held in — tools like a huge cylindrical machine that people slide inside while lying on a narrow table, and a device that resembles a massive hair dryer at a salon.

Henriksson wants to know how the brain extracts useful information from the light that hits our eyes. By showing images to volunteers and recording their brain activity, she hopes to find something that has eluded scientists: the neural computations that help us navigate our surroundings and keep us from running into walls.

In a matter of milliseconds, our brains calculate the geometry of each space we enter, letting us know where the floor meets the walls and walls meet the ceiling.

Certain hotspots in the brain light up at the sight of rooms, but scientists could never be sure which region specifically kept track of walls and floors. With a clever combination of brain imaging and computer modeling, Henriksson cracked the case. The research could answer long-held questions about human perception.

An Enduring Mystery

Eminent philosophers and scholars from Plato to Descartes and beyond have noted how easily people seem to grasp geometrical relationships: the distances and angles between objects and their directional orientations. So easily, they reasoned, that people must possess an innate understanding of geometry.

Psychologists later suggested we possess mental maps that help us understand and move through space, while others proposed we learn by physically exploring our environments. It turns out it’s a little of both. And, humans aren’t the only ones who do it.

“All vertebrates — from fish, to birds, to humans — seem to share a common ability to navigate using environmental boundaries,” says cognitive neuroscientist Sang Ah Lee of the Korea Advanced Institute of Science and Technology. “This ability seems to be a built-in part of our cognitive tool-kit, and even young, untrained individuals like newly-hatched chicks or 18-month-old toddlers can do it on their first try.”

Disoriented rats use the boundaries of their environment to find their way back to hidden food. Zebrafish can return to the previous location of a fellow fish by following the walls of their tank. Animals use this strategy to orient themselves more than they use other visual cues like landmarks. And, the ability to navigate by boundary — which has also been observed in mice, chicks, monkeys, and humans — doesn’t emerge over time. It’s there at birth.

The Visual Complexity of Scenes

Making sense of scenes isn’t easy. Our retinas detect the light bouncing off walls, furniture, and people and generate a flurry of signals in response. Nerve cells funnel these messages to various regions of the brain for processing. The signals change constantly as our eyes wander through space, encountering new objects and scenes.

So how do our brains manage all this complexity? Basically — they don’t.

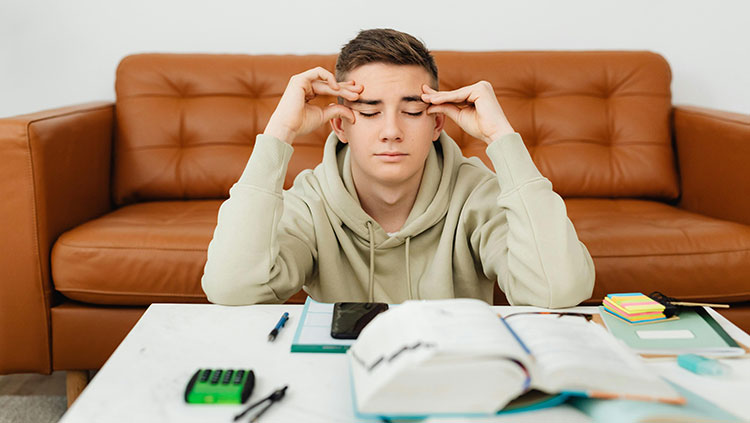

“We have a very limited capacity to perceive everything around us,” says Henriksson, who is a lecturer and researcher at Aalto University in Finland. Consider the sheer number of objects in just one room of your house: the living room. You may have a couch topped with pillows and blankets, a TV on a stand littered with remotes, a side table with a coffee cup placed on it, a rug on the floor, and a fan on the ceiling. Not to mention the walls, which you should avoid running into.

The brain can’t fixate on all the nuanced facets of a scene at once, so it prioritizes some kinds of data over others. Walls and boundaries just happen to make the cut.

Brain Barcodes

Since the 1990s, scientists have known that a few areas of the brain help us process scenes. The most notable of these is a region at the back of the brain called the occipital place area (OPA). The parahippocampal place area (PPA) and retrosplenial complex (RSC) also respond robustly to scenes and react to boundaries like walls.

“What was not understood was the detailed content of representation in these areas, in terms of the patterns of activity and how they relate to different scenes people are viewing,” says Nikolaus Kriegeskorte, a neuroscientist at Columbia’s Zuckerman Institute in New York and one of Henriksson’s collaborators.

Our brains encode our physical environment into distinct patterns of electrical signals a bit like barcodes. Just like a barcode’s pattern of thick and thin lines corresponds to the name, manufacturer, and price of a product, patterns of brain activity represent sensory details in the environment, such as colors, shapes, and textures. By recording volunteers’ brain activity as images of different scenes flashed across a screen, Kriegeskorte and Henriksson uncovered the barcodes that stand for scene layout.

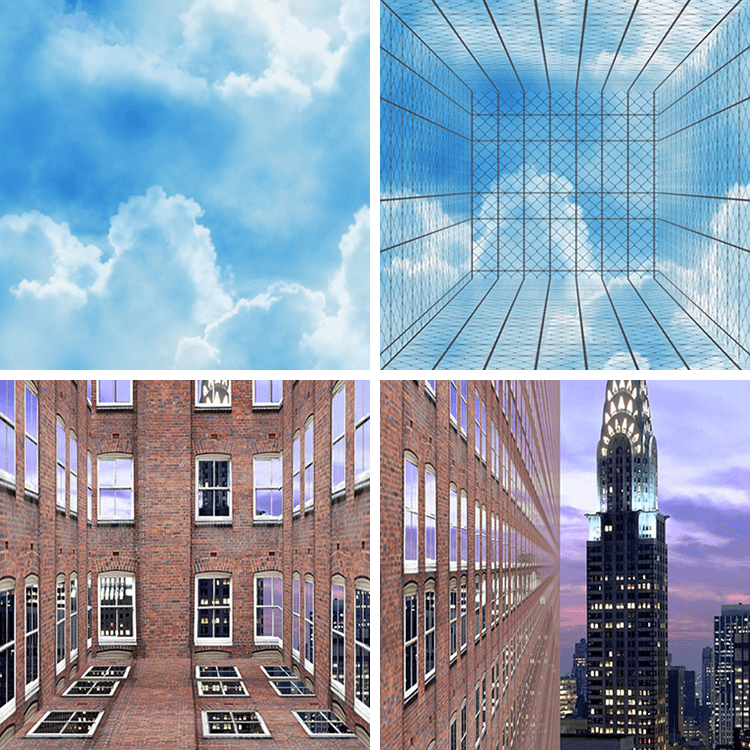

The scenes resembled either a windowless room, a windowed room overlooking a city, or metal fences against a cloudy sky. The bounding features of each scene — its walls, ceiling, and floor — toggled on and off at random.
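
To get a feel for how large such a stimulus set can become, here is a minimal Python sketch that enumerates every on/off combination of boundaries across the three scene styles. The article does not say how many walls were toggled, so the five boundary elements below are an assumption, and all names are hypothetical labels chosen for illustration.

```python
from itertools import product

# Assumption: five toggleable boundary elements. The article names only
# "walls, ceiling, and floor" without giving a count.
BOUNDARIES = ("left_wall", "back_wall", "right_wall", "floor", "ceiling")

# Hypothetical labels for the three scene styles described in the article.
TEXTURES = ("windowless_room", "city_view_room", "fence_and_sky")


def all_layouts():
    """Yield every on/off combination of the boundary elements."""
    for bits in product((0, 1), repeat=len(BOUNDARIES)):
        yield dict(zip(BOUNDARIES, bits))


def all_scenes():
    """Pair every layout with every texture."""
    return [(texture, layout) for texture in TEXTURES for layout in all_layouts()]


layouts = list(all_layouts())
scenes = all_scenes()
print(len(layouts), "layouts,", len(scenes), "scenes")
```

Under these assumptions, each added boundary doubles the number of layouts, which is why even a simple room yields dozens of distinct scenes to show volunteers.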

As the scenes flickered by, the researchers found the OPA reliably encoded the boundaries of each room, regardless of its superficial appearance. The signals indicated which boundaries were present or absent and appeared just 100 milliseconds after the image popped on-screen.

By crafting a computer model from their data, the scientists were able to predict which scene a person was viewing just by looking at their pattern of brain activity. Barcodes from the OPA consistently coded for environmental boundaries, while the PPA patterns better predicted the “texture” of each room, indicating whether the scene was indoors or outdoors, for instance.
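
The idea of reading a scene off a pattern of brain activity can be illustrated with a toy simulation. The Python sketch below is not the researchers' model. It invents random "barcodes" for each boundary and texture, builds one template per layout from one texture, and then decodes patterns simulated with a different texture by picking the nearest template. The voxel count, noise levels, boundary list, and every function name are assumptions made for illustration.

```python
import random
from itertools import product

rng = random.Random(0)  # fixed seed so the simulation is repeatable
N_VOXELS = 40  # assumed number of measurement channels

# Assumed boundary elements and hypothetical texture labels.
BOUNDARIES = ("left_wall", "back_wall", "right_wall", "floor", "ceiling")
TEXTURES = ("room", "city", "fence")


def rand_vec(scale):
    """Random activity pattern with the given standard deviation."""
    return [rng.gauss(0, scale) for _ in range(N_VOXELS)]


# Each boundary adds a strong fixed pattern; each texture adds a weaker one.
boundary_code = {b: rand_vec(1.0) for b in BOUNDARIES}
texture_code = {t: rand_vec(0.5) for t in TEXTURES}


def simulate(layout, texture, noise=0.3):
    """Simulated response: sum of present boundaries, texture, and noise."""
    pattern = rand_vec(noise)
    for name, present in zip(BOUNDARIES, layout):
        if present:
            pattern = [p + c for p, c in zip(pattern, boundary_code[name])]
    return [p + c for p, c in zip(pattern, texture_code[texture])]


def sq_distance(x, y):
    """Squared Euclidean distance between two patterns."""
    return sum((a - b) ** 2 for a, b in zip(x, y))


layouts = list(product((0, 1), repeat=len(BOUNDARIES)))

# "Training": one template per layout, measured with the room texture.
templates = {layout: simulate(layout, "room") for layout in layouts}


def decode(pattern):
    """Return the layout whose template lies closest to the pattern."""
    return min(templates, key=lambda layout: sq_distance(pattern, templates[layout]))


# "Testing": decode layouts from patterns simulated with a different texture.
hits = sum(decode(simulate(layout, "city")) == layout for layout in layouts)
accuracy = hits / len(layouts)
print(f"cross-texture decoding accuracy: {accuracy:.2f} (chance = {1 / len(layouts):.2f})")
```

Because the simulated boundary patterns are stronger than the texture patterns, the decoder still recovers the layout when the texture changes, which loosely mirrors the texture-independent boundary code the article attributes to the OPA.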

“It was quite striking that it was so clearly in the OPA that we encode layout across textures, and that the representation emerges so quickly,” says Henriksson.

Timing is Everything

The sheer speed at which the OPA encodes boundaries — 100 milliseconds, or faster than an average eyeblink — hints at their importance in visual perception.

“It seems as though layout is such an essential thing that we need to extract it from visual images immediately,” says Kriegeskorte.

To capture the lightning-fast brain activity, the researchers combined two imaging techniques: magnetoencephalography (MEG), which tracks, millisecond by millisecond, the magnetic fields generated by neurons’ electrical activity; and functional magnetic resonance imaging (fMRI), which monitors the rush of oxygenated blood toward active brain areas. The fMRI signal is slower, but it lets scientists pinpoint brain activity on a scale of mere millimeters.

The researchers found the OPA encodes boundaries as quickly as the brain processes faces. The brain allocates prime real estate to face perception and can recognize faces at just a glance, likely because faces play an important role in social interactions. The same seems to be true for scenes, with recent studies showing humans can both categorize scenes (kitchen or bedroom) and grasp their features like boundaries and navigability in only 100 milliseconds.

Beyond Basic Geometry

Henriksson incorporated realistic textures, like clouds and city skylines, to evoke real-world scene processing in the lab. But of course, the real world isn’t 2-D.

“There is a limit to what we can infer from showing subjects 2-D graphic images,” says Lee. Our perception of space relies on our 3-D stereoscopic vision, she says. Static images grant scientists experimental control but come at the cost of naturalism. Henriksson plans to incorporate virtual reality in future experiments, allowing study participants to roam through digital 3-D environments as they would real ones.

To truly capture how we navigate, though, scientists must consider how our knowledge, memory, and attention warp visual perception.

“Perception is not just what comes into your eyes; it’s what you know about the world,” says cognitive neuroscientist Emiliano Macaluso of the Lyon Neuroscience Research Center in France. Your expectations and prior experience shape how visual information flows through the brain and how processing centers interact. Though the OPA may encode scene layout, its precise activity may depend on that of the whole network, he says.

Though we move from room to room with little conscious effort, neuroscientists still have a lot to learn about how our brain keeps us from running into walls. How does the brain handle us walking through a scene, as objects and people move past and obscure our view? How do our prior experiences warp our perception? What happens when what we see doesn’t match our expectations?

Henriksson’s work brings scientists one step closer to answering these questions and solving the mystery of how our brain makes sense of a chaotic world.

References

Ferrara, K., & Park, S. (2016). Neural representation of scene boundaries. Neuropsychologia, 89, 180–190. doi: 10.1016/j.neuropsychologia.2016.05.012

Foo, P., Warren, W. H., Duchon, A., & Tarr, M. J. (2005). Do humans integrate routes into a cognitive map? Map- versus landmark-based navigation of novel shortcuts. Journal of Experimental Psychology: Learning, Memory, and Cognition, 31(2), 195–215. doi: 10.1037/0278-7393.31.2.195

Greene, M. R., & Oliva, A. (2009). The Briefest of Glances: The Time Course of Natural Scene Understanding. Psychological Science, 20(4), 464–472. doi: 10.1111/j.1467-9280.2009.02316.x

Hari, R., Henriksson, L., Malinen, S., & Parkkonen, L. (2015). Centrality of Social Interaction in Human Brain Function. Neuron, 88(1), 181–193. doi: 10.1016/j.neuron.2015.09.022

Henriksson, L., Mur, M., & Kriegeskorte, N. (2019). Rapid Invariant Encoding of Scene Layout in Human OPA. Neuron, 103(1), 161–171.e3. doi: 10.1016/j.neuron.2019.04.014

Julian, J. B., Ryan, J., Hamilton, R. H., & Epstein, R. A. (2016). The Occipital Place Area Is Causally Involved in Representing Environmental Boundaries during Navigation. Current Biology, 26(8), 1104–1109. doi: 10.1016/j.cub.2016.02.066

Kriegeskorte, N., & Douglas, P. K. (2019). Interpreting encoding and decoding models. Current Opinion in Neurobiology, 55, 167–179. doi: 10.1016/j.conb.2019.04.002

Lee, S. A. (2017). The boundary-based view of spatial cognition: A synthesis. Current Opinion in Behavioral Sciences, 16, 58–65. doi: 10.1016/j.cobeha.2017.03.006

Lowe, M. X., Rajsic, J., Ferber, S., & Walther, D. B. (2018). Discriminating scene categories from brain activity within 100 milliseconds. Cortex, 106, 275–287. doi: 10.1016/j.cortex.2018.06.006

Macaluso, E. (2018). Functional Imaging of Visuospatial Attention in Complex and Naturalistic Conditions. In Current Topics in Behavioral Neurosciences (pp. 1–24). Retrieved from https://link.springer.com/chapter/10.1007/7854_2018_73

Pitcher, D., Walsh, V., & Duchaine, B. (2011). The role of the occipital face area in the cortical face perception network. Experimental Brain Research, 209(4), 481–493. doi: 10.1007/s00221-011-2579-1

Spelke, E., Lee, S. A., & Izard, V. (2010). Beyond Core Knowledge: Natural Geometry. Cognitive Science, 34(5), 863–884. doi: 10.1111/j.1551-6709.2010.01110.x