The Foundations of Language Processing

- Reviewed 1 May 2023

- Author Marissa Fessenden

- Source BrainFacts/SfN

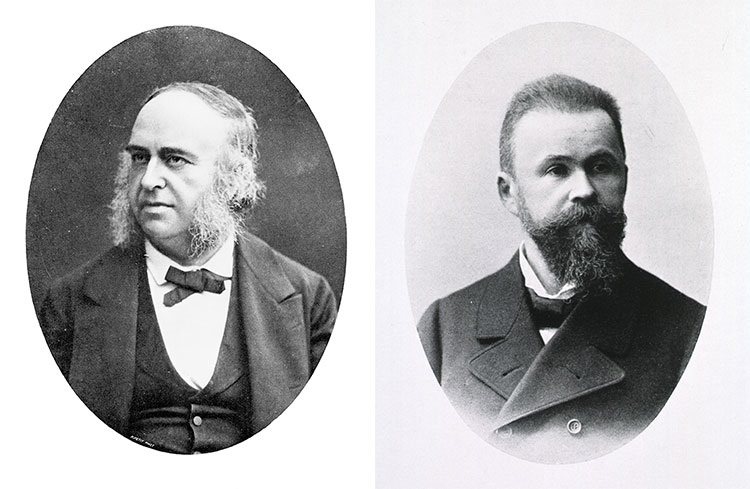

In mid-19th century France, a young man named Louis Victor Leborgne came to live at the Bicêtre Hospital in the suburbs south of Paris. Oddly, the only word he could speak was a single syllable: “Tan.” In the last few days of his life, he met a physician named Pierre Paul Broca. Conversations with Leborgne, whom the world came to know as Patient Tan, led Broca to understand that he could comprehend others’ speech and was responding as best he could, but “tan” was the only expression he was capable of uttering.

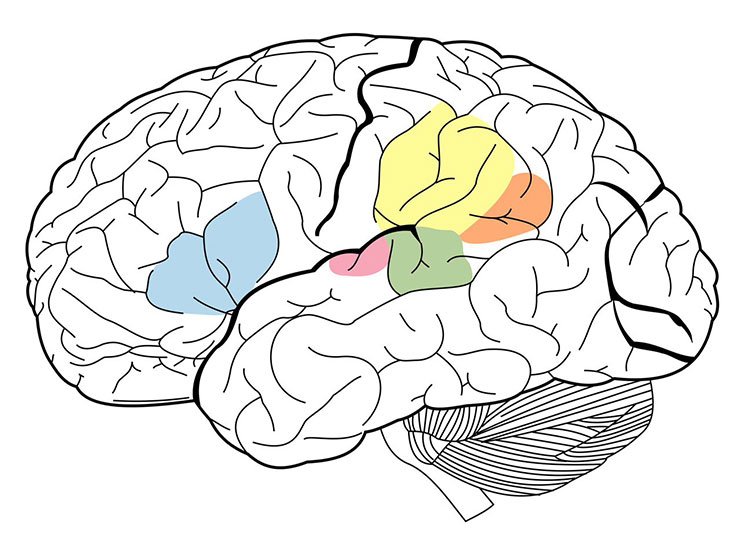

After Leborgne died, Broca performed an autopsy and found a large, damaged area, or lesion, in a portion of the frontal lobe. Since then, we have learned that damage to particular regions within the left hemisphere produces specific kinds of language disorders, or aphasias. The portion of the frontal lobe where Leborgne’s lesion was located is now called Broca’s area and is vital for speech production. Further studies of aphasia have greatly increased our knowledge about the neural basis of language.

Broca’s aphasia is also called “non-fluent” aphasia because speech production is impaired but comprehension is mostly intact. Damage to the left frontal lobe can produce non-fluent aphasias, in which speech output is slow and halting, requires great effort, and often lacks complex words or sentence structure. But while their speaking is impaired, non-fluent aphasics still comprehend spoken language, although their understanding of complex sentences can be poor.

Shortly after Broca published his findings, a German physician, Carl Wernicke, wrote about a 59-year-old woman he referred to as S.A., who had lost her ability to understand speech.

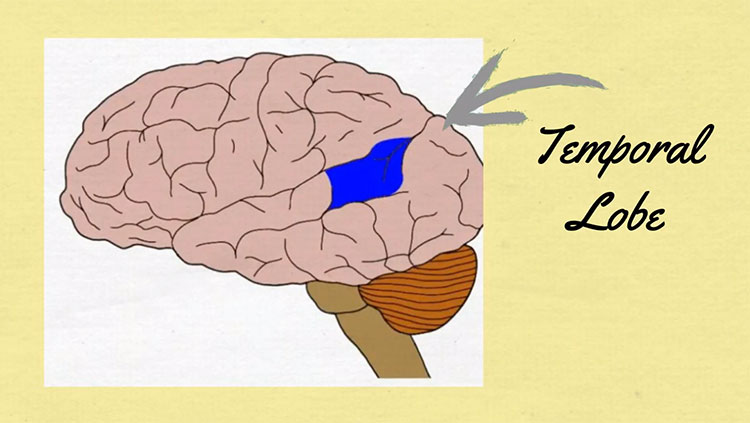

Unlike Leborgne, S.A. could speak fluently, but her utterances made no sense: She offered absurd answers to questions, used made-up words, and had difficulty naming familiar items. After her death, Wernicke determined that she had damage in a region of her left temporal lobe now known as Wernicke’s area. This damage impaired her ability to comprehend speech but not to produce it, a deficit that is now known as “Wernicke’s aphasia,” or “fluent aphasia.” Fluent aphasic patients might understand short individual words, and their speech can sound normal in tone and speed, but it is often riddled with errors in sound and word selection and tends to be unintelligible.

Another type of aphasia is called “pure word deafness,” which is caused by damage to the superior temporal lobes in both hemispheres. Patients with this disorder are unable to comprehend heard speech on any level. But they are not deaf. They can hear speech, music, and other sounds, and can detect the tone, emotion, and even the gender of a speaker. But they cannot link the sound of words to their corresponding meanings. (They can, however, make perfect sense of written language, because visual information bypasses the damaged auditory comprehension area of the temporal lobe.)

Although Broca’s and Wernicke’s work emphasized the role of the left hemisphere in speech and language ability, scientists now know that recognizing speech sounds and individual words actually involves both the left and right temporal lobes. Nonetheless, producing complex speech is strongly dependent on the left hemisphere, including the frontal lobe as well as posterior regions in the temporal lobe. These areas are critical for accessing appropriate words and speech sounds.

Reading and writing involve additional brain regions — those controlling vision and movement. Sensory processing of written words utilizes connections between the brain’s language regions and the areas that process visual perception. In the case of reading and writing, many of the same centers involved in speech comprehension and production are still essential but require input from visual areas that analyze the shapes of letters and words, as well as output to the motor areas that control the hand.

Insights in Language Research

Although our understanding of how the brain processes language is far from complete, molecular genetic studies of inherited language disorders have provided important insights. One language-associated gene, called FOXP2, codes for a special type of protein that switches other genes on and off in particular parts of the brain. Rare mutations in FOXP2 can result in FOXP2-related speech and language disorder: a condition characterized by apraxia of speech, or difficulty coordinating mouth and jaw movements in the sequences required for speech. The disorder is also accompanied by difficulty with spoken and written language starting in early childhood.

Imaging studies have revealed that disruption of FOXP2 can severely affect signaling in the dorsal striatum, part of the basal ganglia located deep in the brain. Specialized neurons in the dorsal striatum express high levels of the product of FOXP2. Mutations in FOXP2 interrupt the flow of information through the striatum and result in speech deficits. These findings show the gene’s importance in regulating signaling between motor and speech regions of the brain. Changes in the nucleotide sequence of FOXP2 might have influenced the development of spoken language in humans and might help explain why humans speak and chimpanzees do not.

Remarkably, many insights into human speech have come from studies of birds, where it is possible to induce genetic mutations and study their effects on singing. Just as human babies learn language during a special developmental period, baby birds learn their songs by imitating a vocal model (a parent or other adult bird) during an early critical period. Like babies’ speech, birds’ song-learning also depends on auditory feedback — their ability to hear their own attempts at imitation. Interestingly, studies have also revealed that FOXP2 mutations can disrupt song development in young birds, much as they do in humans.

Functional imaging studies have also identified brain structures not previously associated with language processing. For example, portions of the middle and inferior temporal lobe participate in accessing the meaning of words. In addition, the anterior temporal lobe is being investigated as a potential player in sentence-level comprehension. Researchers have also identified a sensory-motor circuit for speech in the left posterior temporal lobe, which is thought to aid communication between the systems for speech recognition and production. This circuit is involved in speech development and is likely to support verbal short-term memory.

Adapted from the 8th edition of Brain Facts by Marissa Fessenden.

