Attention: An Eye Opening Story

- Published 1 Nov 2011

- Reviewed 1 Nov 2011

- Author Aalok Mehta

- Source BrainFacts/SfN

New blogs pop up every second. Each minute, more than 48 hours of video are uploaded to YouTube. With digital technology so commonplace, it is easy to drown in information. For researchers studying visual attention, however, this is an old story. For decades, they have investigated how the brain manages and triages its own overwhelming data stream. Neuroscientists estimate that up to 100 megabits of information flow into each eye every second, comparable to the fastest broadband connections.

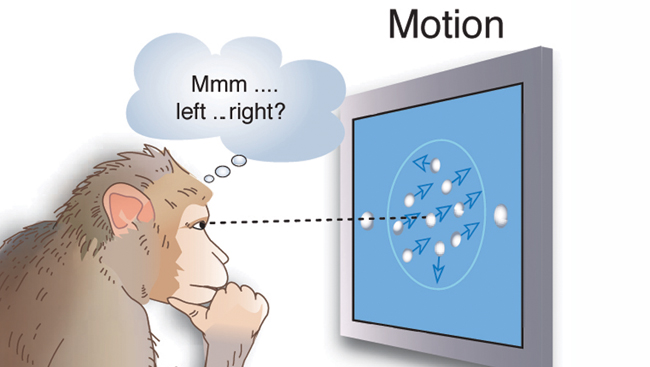

Researchers study visual attention using a technique that tracks a monkey’s visual focus and the resulting neural response.

Modified and reprinted by permission from Macmillan Publishers, Ltd: Nature Neuroscience, 9(7): 861-863, 2006.

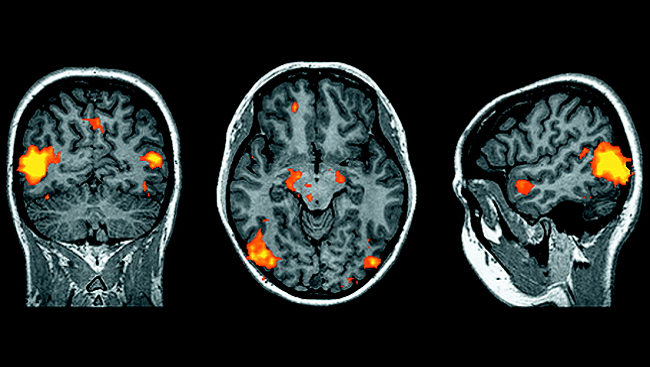

Brain cells in the lateral intraparietal area respond when an object captures a monkey’s attention. The graph shows how the cells respond when a known object (blue) or a distractor (red) appears in their visual field.

Courtesy, with permission: James W. Bisley and Michael E. Goldberg, Journal of Neurophysiology 2006, 95: 1696–1717.

Now, using sophisticated new techniques — including complex computer simulations and live brain imaging — neuroscientists have begun to piece together a more complete picture of how we decide where to look.

Monkey See, Monkey Do

How does the brain select precisely which part of the world to focus on? In recent years, visual attention researchers have benefited greatly from single-cell recording techniques, which use tiny electrodes to track the activity of particular brain cells. Because individual cells in visual processing areas often respond to specific aspects of a visual scene, this technique allows researchers to closely track how the brain makes sense of the outside world.

Animal research has been vital to answering this question. Neuroscientists have developed an array of sophisticated techniques to study the responses of individual neurons in monkeys. These techniques can be used while the monkeys perform tasks that require focusing on important objects and ignoring irrelevant ones that appear and disappear and might otherwise distract them from the task.

Using such tools, SfN Past President Michael E. Goldberg and others learned how the brain forms a visual “priority map.” According to the priority map theory, the brain determines the relative importance of different objects and then orders the eyes to focus on whichever rates the highest.

The brain processes information about an object’s importance in two ways: “bottom-up” and “top-down.” In “bottom-up” processing, the brain weighs an object’s importance based on its inherent characteristics. Greater importance is assigned to features that signify something noteworthy, such as sudden movements, unusual color patterns, and strange shapes. In “top-down” processing, the brain weighs an object’s importance based on previous experience — for instance, discounting brightly colored shirts in a crowded train while looking for the door. Researchers found bottom-up processes are faster than the more effort-intensive top-down ones.
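As a rough illustration of the priority map idea described above, the sketch below gives each object a bottom-up salience score and a top-down relevance weight, combines them, and selects the highest-rated object. The object names, the numbers, and the choice to multiply the two signals are assumptions made purely for illustration; the research described here does not specify such a formula.

```python
# Toy priority map. All names, scores, and the combination rule are
# illustrative assumptions, not a model taken from the research.

def priority(salience, relevance):
    """Combine bottom-up salience with top-down relevance (assumed: product)."""
    return salience * relevance


def pick_target(objects):
    """Return the name of the object with the highest combined priority."""
    return max(objects, key=lambda name: priority(*objects[name]))


# (bottom-up salience, top-down relevance) while looking for the train door
scene = {
    "bright shirt": (0.9, 0.1),  # eye-catching, but discounted by experience
    "train door": (0.4, 1.0),    # visually plain, but relevant to the task
    "window": (0.3, 0.3),
}

target = pick_target(scene)
print(target)
```

In this made-up scene the brightly colored shirt has the highest raw salience, yet the door wins once the top-down weight is applied, mirroring the crowded-train example.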

Although visual information travels through several circuits once it reaches the brain, one brain region that may be particularly important in visual attention is the lateral intraparietal area. Goldberg and others showed that brain activity in this region indicates when an object has captured a monkey’s attention.

Visual Bull’s-Eye

Brain scientists continue to fill in many of the fine details of how visual attention works. Once an object has been viewed, brain cells representing its area of the visual field become inhibited (harder to activate). These findings suggest the things we look at are temporarily marked as unimportant, so we do not continually fixate on the same thing — an idea summarized as “inhibition of return.” Goldberg and others also have scrutinized “surround suppression,” in which visual areas adjacent to an important object are inhibited. Such an effect enhances slight differences between areas of the visual field, highlighting motion and other unusual features and making object recognition easier.
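Inhibition of return can be pictured with a small simulation. The sketch below is a toy model, not the researchers' method: it repeatedly "looks at" the highest-priority location and then damps that location's priority so the next fixation lands somewhere else. The location names, starting values, and the `suppression` factor are all hypothetical.

```python
# Toy inhibition-of-return loop. The priority values and the suppression
# factor are hypothetical numbers chosen only to illustrate the idea.

def scan(priority_map, n_fixations, suppression=0.2):
    """Fixate the highest-priority location, then damp it, n_fixations times."""
    current = dict(priority_map)  # copy so the caller's map is left untouched
    fixations = []
    for _ in range(n_fixations):
        location = max(current, key=current.get)
        fixations.append(location)
        current[location] *= suppression  # temporarily mark as unimportant
    return fixations


order = scan({"face": 0.9, "sign": 0.6, "tree": 0.3}, n_fixations=3)
print(order)
```

With `suppression=1.0` (no inhibition), the same loop would return to `face` on every fixation, which is the kind of repeated fixation that inhibition of return is thought to prevent.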

Attention goes awry in many human disorders, such as attention deficit disorder, schizophrenia, and Alzheimer’s disease. Understanding its basic nature may provide strategies for better diagnosis and treatment of these disorders. In addition, attention findings may help engineers and computer scientists make progress on elusive technologies, including face-scanning and video-search software. Moreover, this research helps address a paradox of human vision: only two percent of the world falls onto the fovea — the sensitive eye cells responsible for sharp vision — but we perceive the world continuously and in vivid detail. With further research, scientists may be able to crack the mystery of how attention, eye movement, and experience combine to transform spotty data into silky smooth high-definition.
