Language and the Brain: What Makes Us Human

- Published 1 Feb 2011

- Reviewed 1 Feb 2011

- Author Aalok Mehta

- Source BrainFacts/SfN

No other species on the planet uses language or writing — a mystery that remains unsolved even after thousands of years of research. Now neuroscientists are taking advantage of powerful new ways to peer into the brain to provide remarkable insights into this unique human ability.

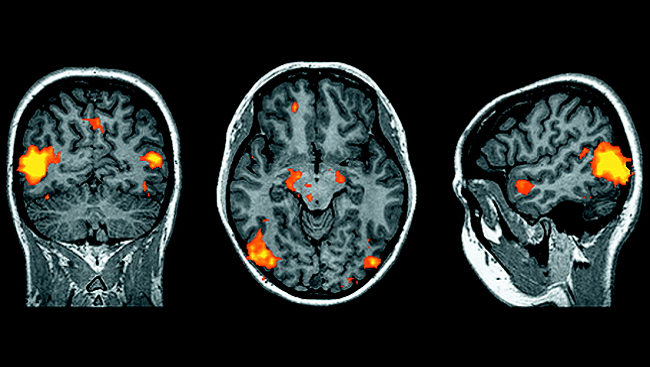

Brain imaging studies have shown that at least five regions, including Broca’s area in the inferior frontal gyrus and Wernicke’s area in the superior temporal gyrus, are involved in speech.

Do you trip over your words, struggle to listen to a dinner companion in a noisy restaurant, or find it difficult to understand a foreign accent on TV? Help may be on the way. Using powerful new research tools, scientists have begun to unravel the long-standing mystery of how the human brain processes and understands speech.

In some ways, language is one of the oldest topics in human history, fascinating everyone from ancient philosophers to modern computer programmers. This is because language helps make us human. Although other animals communicate with one another, we are the only species to use complex speech and to record our messages through writing. The newly invigorated field that studies this ability, known as the neurobiology of language, helps scientists:

- Gain important insights into the brain regions responsible for language comprehension.

- Learn about underlying brain mechanisms that may cause speech and language disorders.

- Understand the “cocktail party effect,” the ability to focus on specific voices against background noise.

Researchers began to identify the brain regions associated with language in the last 200 years by studying people who developed speech problems after they sustained brain injuries. Such studies led to the discovery that two parts of the brain — known as Broca's and Wernicke's areas — were vital to understanding speech and writing. But progress was slow, because studying speech in healthy people was difficult and no reliable animal models could provide useful clues.

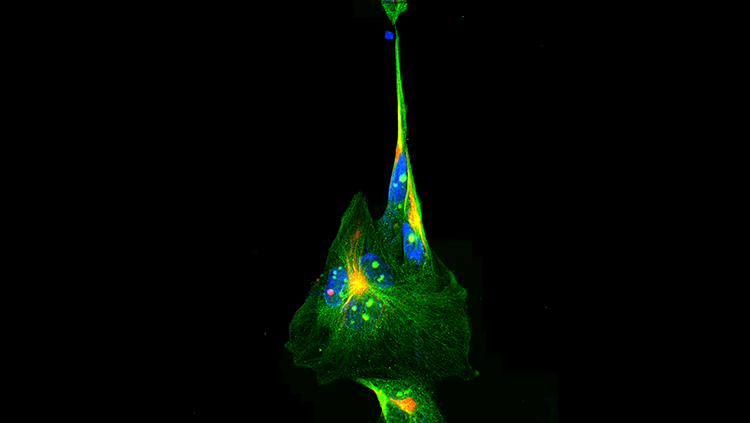

That has changed in the last 20 years. The development of scanning methods has allowed researchers to examine the brains of healthy, awake people. Functional magnetic resonance imaging shows which brain areas are active at any given time, while diffusion tensor imaging lets scientists trace how regions of the brain are connected.

Recent imaging studies reveal that understanding speech requires multiple brain areas. Speech comprehension spans a large, complex network involving at least five regions of the brain and numerous interconnecting fibers. Research suggests this process is more complicated and requires more brainpower than previously thought.

New techniques have been essential for greater insight into speech disorders, such as stuttering. Stuttering affects about one in 20 children, although about 80 percent eventually grow out of it. Once thought to be purely a stress response, the condition has now been linked to abnormalities in brain connections. Stuttering can also be inherited, and scientists have identified at least three genes that may contribute to the condition.

More recently, researchers found that people who stutter show unusual brain activity when listening to sentences, reading silently, and reading silently while someone else is reading aloud. Such findings suggest that stuttering is caused not by a problem with the physical process of speech but by something else, such as planning what to say.

In another study, scientists found that adults who stutter had abnormal brain connections, including fewer links between regions for planning and executing actions. Additionally, women who stutter showed different patterns of brain connections than men. This difference may explain why more men suffer from chronic stuttering, even though approximately equal numbers of boys and girls initially develop the condition.

But brain imaging studies can only go so far. They lack the resolution to investigate individual brain cells. That is why language researchers have turned to one useful animal to model human speech: birds. Songbirds learn to sing much like humans learn to speak. They have another similarity, as any early riser knows: put many of them in a small space and they get noisy as they try to be heard over one another.

This type of overlapping cacophony baffles computers and hearing aids, but healthy humans and birds show a remarkable ability to focus on the voice they want to hear. Researchers recently discovered that songbirds have brain cells that “turn on” in response to particular song notes but not random sounds — whether those notes are present with noise or not. The ability to deal with the cocktail party effect appears to be an essential part of how the brain processes incoming sound.

Many questions remain about how language processing works in the brain. The human brain reshapes itself over time, so it is still unclear whether the brain differences seen in imaging studies, particularly in people who stutter, were present early in life or developed over many years. On a larger level, scientists puzzle over why language has become such an important part of our lives, but not the lives of closely related species, such as chimpanzees.

Interest in the neurobiology of language continues to grow rapidly. Brain scientists recognize the potential importance of their findings. If they are successful, they may help answer the oldest question of all: what makes us human?
