Vision: It All Starts with Light

- Published 1 Apr 2012

- Reviewed 1 Apr 2012

- Source BrainFacts/SfN

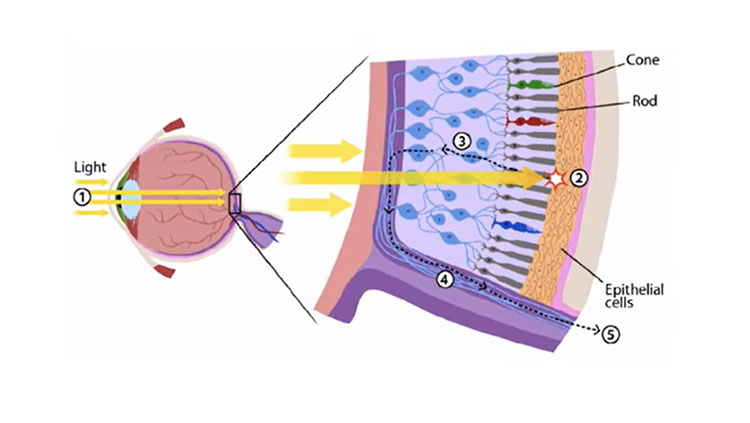

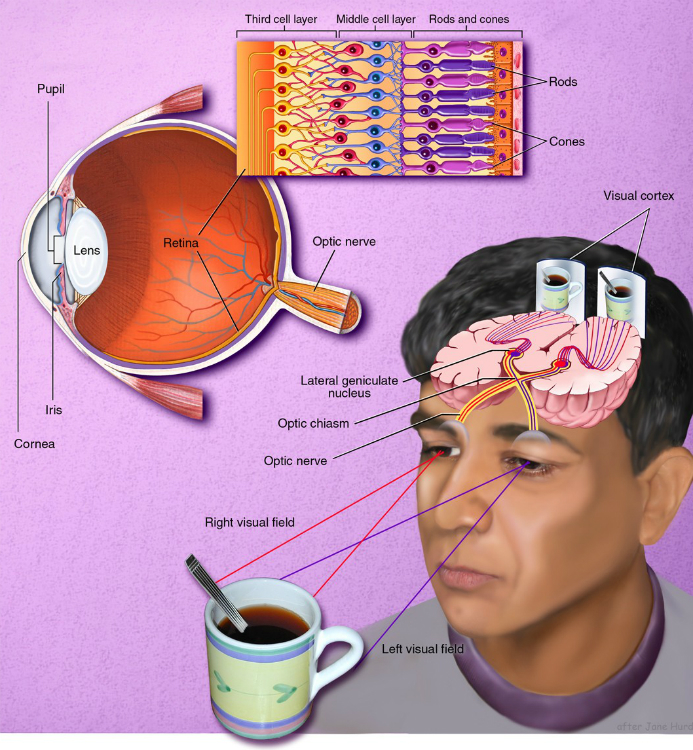

To be able to see anything, eyes first need to process light. Vision begins with light passing through the cornea, which does about three-quarters of the focusing, and then the lens, which adjusts the focus. Both combine to produce a clear image of the visual world on a sheet of photoreceptors called the retina, which is part of the central nervous system but located at the back of the eye.

Photoreceptors gather visual information by absorbing light and sending electrical signals to other retinal neurons for initial processing and integration. The signals are then sent via the optic nerve to other parts of the brain, which ultimately processes the image and allows us to see.

As in a camera, the image on the retina is reversed: Objects to the right of center project images to the left part of the retina and vice versa; objects above the center project to the lower part and vice versa.
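The reversal described above can be sketched in a few lines of Python. This is only an idealized illustration: the function names and the coordinate convention (positive x is right of center, positive y is above center) are assumptions made for the example, and the sketch ignores image scale and the curvature of the eye.

```python
def retinal_position(x, y):
    """Map an object's offset from the center of gaze to its image offset on the retina.

    Assumed convention: positive x = right of center, positive y = above center.
    The image is modeled as a simple point inversion (both axes flipped).
    """
    return (-x, -y)


def describe(x, y):
    """Return a rough verbal label for a position, e.g. 'upper right'."""
    horizontal = "right" if x > 0 else "left" if x < 0 else "center"
    vertical = "upper" if y > 0 else "lower" if y < 0 else "center"
    return f"{vertical} {horizontal}"


obj = (2.0, 1.0)                 # an object above and to the right of center
img = retinal_position(*obj)     # its image lands below and to the left
print(describe(*obj), "->", describe(*img))
```

An object in the upper right of the scene maps to the lower left of the retina, matching the camera analogy.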

The size of the pupil, which regulates how much light enters the eye, is controlled by the iris. The shape of the lens is altered by the muscles just behind the iris so that near or far objects can be brought into focus on the retina.

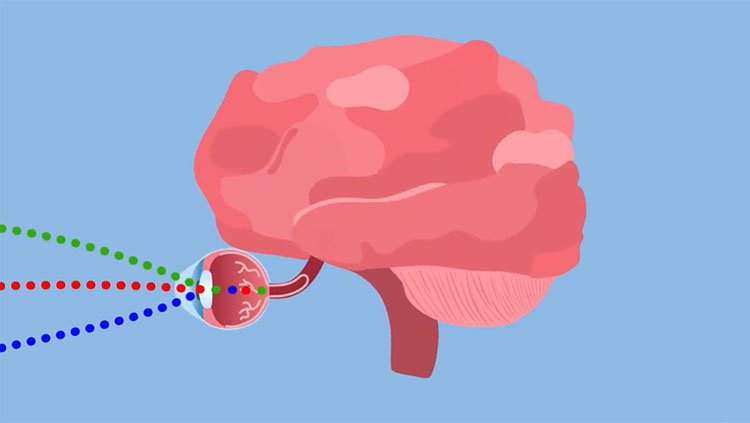

Primates, including humans, have well-developed vision using two eyes, called binocular vision. Visual signals pass from each eye along the million or so fibers of the optic nerve to the optic chiasm, where some nerve fibers cross over. This crossover allows both sides of the brain to receive signals from both eyes.

When you look at a scene with both eyes, the objects to your left register on the right side of the retina. This visual information then maps to the right side of the cortex. The result is that the left half of the scene you are watching registers in the cerebrum’s right hemisphere. Conversely, the right half of the scene registers in the cerebrum’s left hemisphere. A similar arrangement applies to movement and touch: Each half of the cerebrum is responsible for processing information received from the opposite half of the body.

Scientists know much about the way cells encode visual information in the retina, but considerably less about the visual cortex and the lateral geniculate nucleus, an intermediate way station between the retina and the visual cortex. Studies of the inner workings of the retina give us the best knowledge we have to date about how the brain analyzes and processes sensory information.

Photoreceptors, about 125 million in each human eye, are neurons specialized to turn light into electrical signals. The two major types of photoreceptors are rods and cones. Rods are extremely sensitive to light and allow us to see in dim light, but they do not convey color. Rods constitute 95 percent of all photoreceptors in humans. Most of our vision, however, comes from cones, which work under most light conditions and are responsible for acute detail and color vision.
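To put those proportions in absolute terms, here is a small back-of-the-envelope calculation in Python. It uses only the two figures given above (about 125 million photoreceptors per eye, 95 percent of them rods); the variable and function names are made up for the example, and the results are rough estimates rather than measured counts.

```python
TOTAL_PHOTORECEPTORS = 125_000_000  # approximate count per eye, from the text
ROD_PERCENT = 95                    # share of photoreceptors that are rods, from the text


def photoreceptor_counts(total=TOTAL_PHOTORECEPTORS, rod_percent=ROD_PERCENT):
    """Split an approximate photoreceptor total into rods and cones."""
    rods = total * rod_percent // 100   # integer arithmetic avoids rounding noise
    cones = total - rods
    return rods, cones


rods, cones = photoreceptor_counts()
print(f"rods:  about {rods / 1e6:.1f} million")
print(f"cones: about {cones / 1e6:.1f} million")
```

On these figures, roughly 119 million rods and about 6 million cones share each retina, so the cells responsible for detail and color are a small minority.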

The human eye contains three types of cones (red, green and blue), each sensitive to a different range of colors. Because their sensitivities overlap, cones work in combination to convey information about all visible colors. You might be surprised to know that we can see thousands of colors using only three types of cones, but computer monitors use a similar process to generate a spectrum of colors. The central part of the human retina, where light is focused, is called the fovea, which contains only red and green cones. The area around the fovea, called the macula, is critical for reading and driving. Death of photoreceptors in the macula, called macular degeneration, is a leading cause of blindness among the elderly population in developed countries, including the United States.

The retina contains three organized layers of neurons. The rod and cone photoreceptors in the first layer send signals to the middle layer (interneurons), which then relays signals to the third layer, consisting of multiple different types of ganglion cells, specialized neurons near the inner surface of the retina. The axons of the ganglion cells form the optic nerve. Each neuron in the middle and third layer typically receives input from many cells in the previous layer, and the number of inputs varies widely across the retina.

Near the center of the gaze, where visual acuity is highest, each ganglion cell receives inputs — via the middle layer — from one cone or, at most, a few, allowing us to resolve very fine details. Near the margins of the retina, each ganglion cell receives signals from many rods and cones, explaining why we cannot see fine details on either side. Whether large or small, the region of visual space providing input to a visual neuron is called its receptive field.
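A toy model can show why the number of inputs per ganglion cell matters for fine detail. In the Python sketch below, each hypothetical ganglion cell simply averages a fixed, non-overlapping group of photoreceptor signals. That averaging rule, the pool sizes, and the function name are all simplifying assumptions for illustration; real receptive fields are more complex than this.

```python
def ganglion_outputs(photoreceptor_signals, pool_size):
    """Average non-overlapping groups of `pool_size` photoreceptor signals.

    Each group stands in for the receptive field of one ganglion cell.
    """
    outputs = []
    for start in range(0, len(photoreceptor_signals), pool_size):
        group = photoreceptor_signals[start:start + pool_size]
        outputs.append(sum(group) / len(group))
    return outputs


# A fine pattern of alternating light (1) and dark (0) stripes.
stripes = [1, 0, 1, 0, 1, 0, 1, 0]

central = ganglion_outputs(stripes, pool_size=1)     # one photoreceptor per cell
peripheral = ganglion_outputs(stripes, pool_size=4)  # four photoreceptors per cell

print("central:   ", central)
print("peripheral:", peripheral)
```

With one input per cell, the stripes survive intact. With four inputs per cell, every output is the same intermediate value and the stripes can no longer be told apart, which is the loss of fine detail described above for the margins of the retina.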
