How Powerful Illusions Reveal Coding In Your Brain

- Published 19 May 2016

- Reviewed 13 May 2016

- Source BrainFacts/SfN

Your eyes can play tricks on you, and visual illusions take advantage of these glitches in our perception. Studying these glitches can tell us a lot about how brain cells code for things like color or motion, as shown in this video by Stanford University graduate student Guillaume Riesen. The video took second place in the 2015 Brain Awareness Video Contest.

Transcript

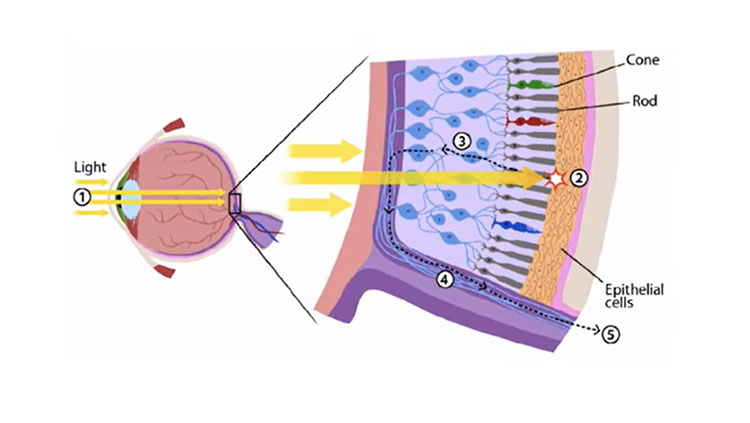

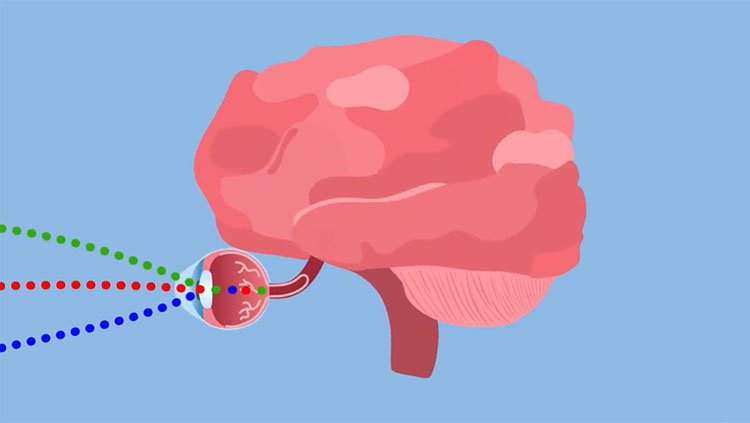

Evolution has found a much less expensive solution to this problem. Rather than having lots of very selective receptors, our eyes actually use a small number of widely-tuned units. These respond most strongly to their preferred colors, and less and less to more distant ones (beeEEEEeeep). So rather than having a purely ‘red’ sensor, there’s one that responds more strongly to red than any other color. It turns out that the human eye only needs three detectors to cover this entire spectrum - you may have heard of the ‘red, green and blue cones’. Generally speaking, the human eye has these three color detectors spread all across the visual field and uses their relative activity to tell which colors are where. If the blue cone is more active than the other two, you’re seeing something blue. If the blue and green cones are more active than the red, you’re seeing cyan. And if all of the cones are equally active, you’re seeing gray or white. So all of the colors you see on this scale are a result of different combinations of activity in just those three channels.
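The idea of a few broadly tuned detectors can be sketched in a few lines of Python. This is only an illustration, not a model of real cones: the preferred wavelengths in `PREFERRED`, the tuning width `WIDTH`, and the bell-shaped (Gaussian) falloff are all assumptions chosen to make the point that each detector responds to a wide range of colors, and that the balance between detectors is what identifies the color.

```python
import math

# Hypothetical preferred wavelengths (nm) and tuning width.
# Illustrative values only, not measured cone sensitivities.
PREFERRED = {"blue": 440.0, "green": 535.0, "red": 565.0}
WIDTH = 50.0


def responses(wavelength_nm):
    """Activity of each broadly tuned detector: strongest at its preferred
    wavelength, weaker and weaker for more distant ones."""
    return {
        name: math.exp(-((wavelength_nm - peak) ** 2) / (2 * WIDTH ** 2))
        for name, peak in PREFERRED.items()
    }


def relative(activity):
    """Normalize so that only the balance between detectors matters."""
    total = sum(activity.values())
    return {name: value / total for name, value in activity.items()}


# Every wavelength drives all three detectors a little; the pattern differs.
for wavelength in (450, 500, 620):
    pattern = relative(responses(wavelength))
    print(wavelength, {name: round(share, 2) for name, share in pattern.items()})
```

No single detector says "this is 500 nm" on its own. Each wavelength leaves a different fingerprint across the three channels, which is all the system needs.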

This system works great most of the time, but because it relies on relative activity it can fail if something upsets the balance of your detectors. It turns out that this is possible, and actually quite easy to do! The trick is that the detectors effectively get tired over time. So if you’re looking at something red for long enough, your red detectors are going “RED! RED! Red! Red. red…” while the green and blue detectors are just kind of relaxing. So if you then look at something white - where normally all three channels would be equally active - the green and blue guys are ready to jump into action. The red channel, however, is still a bit tired out and so it’ll respond less. Your brain then reads the relative activities of these three channels and concludes that you’re seeing more blue and green than red. So suddenly instead of seeing white, you’re seeing cyan! If you look at any color for long enough, your color system gets selectively fatigued so that it then gives a false reading in the opposite direction afterwards. This is called an afterimage.

Stare at this cross here for a bit. The picture you’re looking at is a photo I took, with the colors inverted. In a moment I’m going to switch to a completely black-and-white version. Don’t move your eyes when I do. Rather than seeing the black and white, you’ll be seeing the opposite of what you’re now looking at - and you’ll get [flip] the original colors back! There is absolutely no color in this photo, but for a short while you should see a full-color image that only exists in your brain.
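The fatigue story behind the afterimage can be written as a toy model. Everything here is a simplifying assumption made for illustration: each detector has a `gain` that drops in proportion to how hard it was driven (the `rate` parameter is made up), and `perceived` names a color by checking which channels are close to the most active one. Beyond the blue, cyan and gray-or-white cases described above, the remaining color names follow ordinary additive color mixing.

```python
def perceived(red, green, blue, tolerance=0.05):
    """Name a color from the balance of three detector activities."""
    strongest = max(red, green, blue)
    if strongest == 0:
        return "black"
    # A channel counts as "more active" if it is within `tolerance`
    # (as a fraction) of the strongest channel.
    active = tuple(v >= strongest * (1 - tolerance) for v in (red, green, blue))
    names = {
        (True, False, False): "red",
        (False, True, False): "green",
        (False, False, True): "blue",
        (False, True, True): "cyan",
        (True, True, False): "yellow",
        (True, False, True): "magenta",
        (True, True, True): "gray or white",
    }
    return names[active]


def respond(stimulus, gains):
    """Detector activity: stimulus drive scaled by each detector's gain."""
    return tuple(s * g for s, g in zip(stimulus, gains))


def adapt(gains, stimulus, rate=0.5):
    """Fatigue (assumed rule): the harder a detector is driven,
    the more its gain drops."""
    return tuple(g * (1 - rate * s) for g, s in zip(gains, stimulus))


WHITE = (1.0, 1.0, 1.0)
RED = (1.0, 0.0, 0.0)

fresh = (1.0, 1.0, 1.0)
tired = adapt(fresh, RED)  # stare at red: only the red gain drops

print(perceived(*respond(WHITE, fresh)))  # gray or white
print(perceived(*respond(WHITE, tired)))  # cyan
```

The white stimulus is identical in both calls. Only the state of the detectors has changed, and that is enough to flip the reading from white to cyan.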

This kind of coding is not unique to color perception. My favorite example actually involves motion. When you stare at the center of this video, each part of your eye is seeing a particular type of motion. The center of your vision is expanding, so whatever is responsible for detecting that is getting fatigued. When you look away in a moment, those detectors will then be tired out and you’ll have a bias in your motion perception system. As a result, even if what you’re looking at is not moving, your brain will infer that there’s something going on in the world that’s moving opposite to what you’re now looking at. This is called a motion aftereffect. Alright, now that you’ve looked long enough, look down at your keyboard. Isn’t that fantastic? Even though everything is staying absolutely still, you should have the impression of some kind of motion going on out in the world. And that’s a result of having biased your motion perception system.

Both of these illusions give us insight into what the brain is actually doing. It seems to be using the relative activity of a population of detectors to decide what it’s seeing, rather than reading off the activity of any one unit. This is called population coding. Population coding is very efficient, but it is susceptible to these kinds of glitches any time you look at the same thing for long enough to tire out some subset of your detectors.
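The same logic carries over to motion. The sketch below is a deliberately simplified stand-in for the expanding video: instead of expansion it uses a single direction of motion, with eight hypothetical detectors tuned to directions 45 degrees apart. The readout is a population vector, where each detector votes for its preferred direction in proportion to its activity. The tuning width, fatigue `rate`, and the equal resting activity `BASELINE` for a static scene are all assumptions.

```python
import math

DIRECTIONS = [i * 45.0 for i in range(8)]  # preferred directions, degrees
BASELINE = 1.0  # assumed resting activity of every detector for a static scene


def tuning(preferred, direction, width=60.0):
    """Response of a detector to motion in `direction` (degrees)."""
    # Angular difference wrapped into the range [-180, 180).
    diff = (direction - preferred + 180.0) % 360.0 - 180.0
    return math.exp(-(diff ** 2) / (2 * width ** 2))


def adapt(gains, direction, rate=0.5):
    """Fatigue (assumed rule): detectors driven by the motion lose gain."""
    return [g * (1 - rate * tuning(p, direction))
            for g, p in zip(gains, DIRECTIONS)]


def population_vector(activity):
    """Sum of each detector's preferred direction, weighted by its activity."""
    x = sum(a * math.cos(math.radians(p)) for a, p in zip(activity, DIRECTIONS))
    y = sum(a * math.sin(math.radians(p)) for a, p in zip(activity, DIRECTIONS))
    return x, y


fresh = [1.0] * len(DIRECTIONS)
tired = adapt(fresh, direction=0.0)  # watch motion toward 0 degrees for a while

# A static scene drives every detector equally; only the gains differ.
still_x, still_y = population_vector([BASELINE * g for g in fresh])
after_x, after_y = population_vector([BASELINE * g for g in tired])
after_angle = math.degrees(math.atan2(after_y, after_x)) % 360.0

print("fresh:", round(still_x, 6), round(still_y, 6))
print("after adapting: net direction", round(after_angle, 1), "degrees")
```

With fresh detectors the votes cancel and the static scene reads as still. After adapting to motion toward 0 degrees, the detectors tuned near that direction vote more weakly, so the sum tips toward 180 degrees, the opposite direction, even though nothing is moving.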

Even seemingly abstract things like the number of objects appear to be encoded this way. If you stare at the center of this display, the left part of your vision is somehow seeing something like ‘few’ while the right part is seeing ‘many’. In a moment, I’m going to switch the display so that both sides have the same number of dots. If the brain is using the same kind of system as we’ve just explored with color and motion, we’d expect that you’ll see the opposite of what you were just looking at. This effect is more subtle than the ones I’ve shown you so far, so you’ll have to pay close attention to your impression right as I switch. So here we go - which side looks like it has more? Most people will see the left side as having more dots than the right - even though closer inspection will show that they’re in fact mirror images! Your perception has been biased by looking at that previous stimulus. This is exactly what we’d predict from a system based on population coding.

The best way to learn about a system is often to study its glitches, and as you can see, human vision can be fairly glitchy! By coming up with models that predict the same quirks we ourselves experience, we can gain insight into how the brain actually works.